How Large Language Models Work: From Data to Intelligent Conversation

Author : Ranga Technologies

Publish Date : 3 / 17 / 2026

Last Updated : 3 / 17 / 2026

Large Language Models (LLMs) such as GPT-4, Gemini, and Claude have redefined the boundaries of natural language understanding and generation. Their ability to produce coherent, context-aware, and often creative responses has revolutionized AI applications across industries. But beyond the surface, these models embody sophisticated architectures, training paradigms, and inference strategies that enable their remarkable capabilities. This blog explores the advanced workings of LLMs, focusing on their architectural innovations, training methodologies, and deployment nuances that power intelligent conversations.

Advanced Architecture: Transformers and Beyond

Multi-Head Self-Attention at Scale

At the core of modern LLMs lies the transformer architecture, which employs multi-head self-attention mechanisms. This allows the model to simultaneously attend to multiple positions within the input sequence, capturing complex dependencies and contextual relationships across long text spans.

- Dynamic Contextualization: Each attention head learns to focus on different semantic or syntactic aspects, enabling nuanced understanding.

- Layer Stacking and Residual Connections: Deep stacks of transformer layers with residual pathways facilitate gradient flow and enable the model to learn hierarchical representations of language.
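
To make the mechanism concrete, here is a minimal sketch of multi-head self-attention with a residual connection, written in plain Python. It is an illustration under simplifying assumptions, not how any production model is implemented: the learned projection matrices are omitted, each head simply works on its own slice of the token vector, and the function names are hypothetical.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention_head(queries, keys, values):
    """Scaled dot-product attention for one head (lists of per-token vectors)."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this token's query to every key, scaled by sqrt(d_k).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        outputs.append([sum(w * v[j] for w, v in zip(weights, values))
                        for j in range(len(values[0]))])
    return outputs

def multi_head_self_attention(x, num_heads):
    """Split each token vector into num_heads slices, attend per slice, concatenate.

    Assumption: no learned Q/K/V/output projections, to keep the sketch short.
    """
    d_model = len(x[0])
    assert d_model % num_heads == 0, "d_model must be divisible by num_heads"
    d_head = d_model // num_heads
    head_outputs = []
    for h in range(num_heads):
        piece = [tok[h * d_head:(h + 1) * d_head] for tok in x]
        head_outputs.append(attention_head(piece, piece, piece))
    # Concatenate the per-head outputs back into one vector per token.
    return [sum((head[t] for head in head_outputs), []) for t in range(len(x))]

def add_residual(x, sublayer_out):
    """Residual connection: add the sublayer output back onto its input."""
    return [[a + b for a, b in zip(tok, out)] for tok, out in zip(x, sublayer_out)]

tokens = [[1.0, 0.0, 0.0, 1.0], [0.0, 1.0, 1.0, 0.0], [1.0, 1.0, 0.0, 0.0]]
attended = multi_head_self_attention(tokens, num_heads=2)
layer_out = add_residual(tokens, attended)
```

Each head sees only its own slice of the representation, which is one simple way to picture how different heads can specialize; real layers learn separate projections per head instead of slicing the raw input.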

Positional Encoding and Extended Context Windows

Since transformers process all input tokens in parallel rather than sequentially, they rely on positional encodings to retain word-order information. Recent advances have extended context windows from a few thousand tokens to hundreds of thousands and, in some models, a million or more, allowing LLMs to maintain coherence over entire documents, long conversations, or multi-modal inputs.
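
As one illustration of a positional encoding, the sketch below builds the fixed sinusoidal table from the original Transformer design. This is an assumption for the sake of example: the text above does not say which scheme a given model uses, and many current models use learned or rotary position embeddings instead.

```python
import math

def sinusoidal_positional_encoding(seq_len, d_model):
    """Return a seq_len x d_model table of sinusoidal position encodings."""
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            # Dimensions (2k, 2k+1) share one frequency; even uses sin, odd uses cos.
            angle = pos / (10000 ** ((2 * (i // 2)) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table

# The table is added element-wise to the token embeddings before the first layer.
pe = sinusoidal_positional_encoding(seq_len=4, d_model=6)
```

Because every position gets a distinct pattern of values, the model can tell "dog bites man" from "man bites dog" even though all tokens are processed at once.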

Sparse and Efficient Attention Variants

To manage the cost of attention, which grows quadratically with sequence length, many LLMs incorporate sparse or otherwise efficient attention variants that attend only to a selected subset of tokens, reducing memory and compute demands with little loss in quality. This helps keep inference times feasible even as models grow toward trillions of parameters and context windows lengthen.
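
One simple sparse pattern, chosen here purely for illustration, is a sliding window in which each token attends only to its nearest neighbors. The sketch below builds such a mask and counts how many token pairs remain compared with dense attention; the function names are hypothetical.

```python
def sliding_window_mask(seq_len, window):
    """mask[i][j] is True when token i may attend to token j (|i - j| <= window)."""
    return [[abs(i - j) <= window for j in range(seq_len)] for i in range(seq_len)]

def count_attended_pairs(mask):
    """Number of (query, key) pairs that are actually computed."""
    return sum(sum(row) for row in mask)

mask = sliding_window_mask(seq_len=6, window=1)
sparse_pairs = count_attended_pairs(mask)   # grows linearly with seq_len
dense_pairs = 6 * 6                         # grows quadratically with seq_len
```

With a fixed window, the number of attended pairs grows roughly linearly with sequence length instead of quadratically, which is where the savings come from.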

Sophisticated Training Paradigms

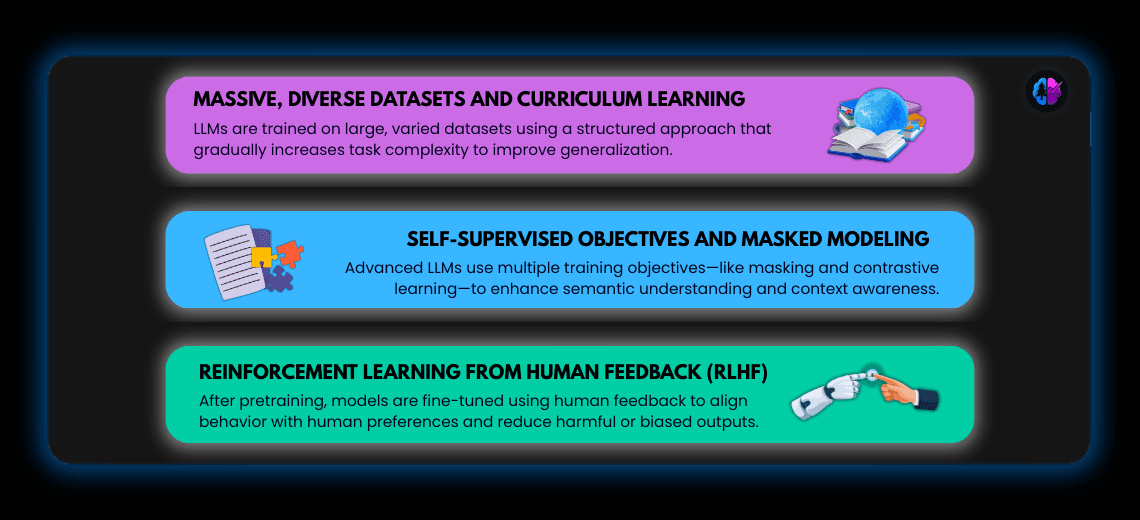

Massive, Diverse Datasets and Curriculum Learning

LLMs are trained on vast, heterogeneous corpora spanning books, articles, code repositories, and web data. Training sometimes employs curriculum learning, where the model is exposed to progressively more complex tasks or data distributions, with the aim of improving generalization and robustness.
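
A curriculum can be sketched as ordering examples from easy to hard and widening the training pool stage by stage. The example below is a toy version under a loud assumption: difficulty is approximated by word count, which is a hypothetical proxy and not something the text above specifies.

```python
import math

def curriculum_stages(examples, num_stages, difficulty):
    """Return cumulative training pools, from easiest examples to the full set."""
    ordered = sorted(examples, key=difficulty)
    stages = []
    for s in range(1, num_stages + 1):
        # Stage s trains on the easiest s/num_stages fraction of the data.
        cutoff = math.ceil(len(ordered) * s / num_stages)
        stages.append(ordered[:cutoff])
    return stages

def word_count(text):
    """Hypothetical difficulty proxy: longer sentences count as harder."""
    return len(text.split())

corpus = ["a b", "a", "a b c d", "a b c"]
stages = curriculum_stages(corpus, num_stages=2, difficulty=word_count)
```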

Self-Supervised Objectives and Masked Modeling

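Most generative LLMs are pretrained with next-token prediction. Other self-supervised objectives, such as masked language modeling (as in BERT), span prediction (as in T5), and contrastive learning, are used in related model families or as complementary objectives to deepen semantic understanding and contextual reasoning.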
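
The difference between next-token prediction and masked language modeling is easiest to see in how training examples are built from raw text. The sketch below is a simplified illustration: the `[MASK]` symbol and the 15% default masking rate follow common convention and are assumptions here, and real pipelines operate on subword token IDs rather than words.

```python
import random

def next_token_pairs(tokens):
    """(context, target) pairs: predict each token from everything before it."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]

def mask_tokens(tokens, mask_prob=0.15, mask_token="[MASK]", seed=0):
    """Hide a random subset of tokens; the model must recover them from both sides."""
    rng = random.Random(seed)
    masked, targets = [], {}
    for i, tok in enumerate(tokens):
        if rng.random() < mask_prob:
            masked.append(mask_token)
            targets[i] = tok      # position -> original token to predict
        else:
            masked.append(tok)
    return masked, targets

sentence = ["the", "cat", "sat", "on", "the", "mat"]
causal_examples = next_token_pairs(sentence)
masked_sentence, to_predict = mask_tokens(sentence, mask_prob=0.3)
```

In both cases the labels come from the text itself, which is why no human annotation is needed at the pretraining stage.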

Reinforcement Learning from Human Feedback (RLHF)

After pretraining, LLMs are commonly fine-tuned with RLHF: human evaluators rank candidate outputs, a reward model is trained to predict those rankings, and the LLM is then optimized against that reward model to favor preferred behaviors. This process improves alignment with human preferences, reduces toxic or biased outputs, and enhances response relevance.
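
One common way to turn human rankings into a training signal is to fit a reward model with a pairwise preference loss. The sketch below shows that loss for a single comparison; it is an assumption about a typical setup rather than a description of any specific model's pipeline, and the reward values are made-up numbers.

```python
import math

def pairwise_preference_loss(reward_chosen, reward_rejected):
    """-log(sigmoid(r_chosen - r_rejected)), computed in a numerically stable way.

    The loss is small when the reward model scores the human-preferred
    response higher than the rejected one, and large when it does not.
    """
    diff = reward_chosen - reward_rejected
    if diff >= 0:
        return math.log1p(math.exp(-diff))
    return -diff + math.log1p(math.exp(diff))

# Hypothetical reward-model scores for two responses to the same prompt.
agrees_with_humans = pairwise_preference_loss(2.0, 0.0)
disagrees_with_humans = pairwise_preference_loss(0.0, 2.0)
```

Minimizing this loss over many ranked pairs teaches the reward model to score responses the way evaluators would; the language model is then tuned to produce responses that score well.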

Inference and Generation: From Tokens to Conversations

Probabilistic Decoding Strategies

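LLMs generate text token by token. At each step the model produces a probability distribution over the vocabulary, and a decoding method selects the next token: greedy decoding and beam search pick high-probability continuations deterministically, while top-k sampling and nucleus (top-p) sampling draw randomly from a truncated distribution to balance coherence, diversity, and creativity in responses.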
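
The sketch below shows how top-k and nucleus sampling narrow down the candidates for a single decoding step. It works on a toy four-token vocabulary with made-up logits; the function names and the temperature parameter are illustrative choices, not a particular library's API.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Convert logits to probabilities; lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_filter(probs, k):
    """Keep the k most probable token ids and renormalize."""
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    total = sum(probs[i] for i in top)
    return {i: probs[i] / total for i in top}

def nucleus_filter(probs, p):
    """Keep the smallest set of top tokens whose cumulative probability reaches p."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= p:
            break
    total = sum(probs[i] for i in kept)
    return {i: probs[i] / total for i in kept}

def sample(dist, rng):
    """Draw one token id from a {token_id: probability} mapping."""
    ids = list(dist)
    return rng.choices(ids, weights=[dist[i] for i in ids], k=1)[0]

rng = random.Random(0)
probs = softmax([2.0, 1.5, 0.3, -1.0])   # made-up logits for 4 tokens
next_token = sample(nucleus_filter(probs, p=0.9), rng)
```

Top-k always keeps a fixed number of candidates, whereas nucleus sampling keeps more candidates when the model is uncertain and fewer when it is confident.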

Contextual Conditioning and Prompt Engineering

The model’s output is heavily influenced by prompt design, enabling in-context learning where the model adapts behavior based on examples or instructions embedded in the input without explicit retraining.
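
In-context learning can be pictured as nothing more than careful string assembly: the examples travel inside the prompt, and the model's weights never change. The helper below is a hypothetical sketch of a few-shot prompt builder; the `Input:`/`Output:` layout is one arbitrary convention among many.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble an instruction, worked examples, and a new query into one prompt."""
    lines = [instruction, ""]
    for example_input, example_output in examples:
        lines += [f"Input: {example_input}", f"Output: {example_output}", ""]
    # The prompt ends with an open "Output:" for the model to complete.
    lines += [f"Input: {query}", "Output:"]
    return "\n".join(lines)

prompt = build_few_shot_prompt(
    "Classify the sentiment of each review as positive or negative.",
    [("Great battery life.", "positive"), ("Stopped working in a week.", "negative")],
    "The screen is gorgeous.",
)
```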

Multi-Modal and Tool-Augmented Generation

Cutting-edge LLMs integrate multi-modal inputs (text, images, audio) and can invoke external tools or APIs during inference, effectively acting as autonomous agents capable of complex tasks beyond pure text generation.
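
Tool use during inference usually follows a loop: the model either answers or requests a tool, the application runs the tool, and the result is fed back to the model. The sketch below shows that control flow with a stand-in for the model. Everything here is hypothetical, including the message format, the `fake_model` function, and the single `add` tool; a real system would call an actual LLM and follow its provider's tool-calling format.

```python
import json

# Hypothetical tool registry: name -> Python callable.
TOOLS = {"add": lambda a, b: a + b}

def fake_model(messages):
    """Stand-in for an LLM call: requests a tool once, then answers."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"type": "answer", "text": f"The result is {last['content']}."}
    return {"type": "tool_call", "name": "add", "args": {"a": 2, "b": 3}}

def run_agent(user_message, model=fake_model, max_steps=5):
    """Alternate between model replies and tool executions until an answer appears."""
    messages = [{"role": "user", "content": user_message}]
    for _ in range(max_steps):
        reply = model(messages)
        if reply["type"] == "answer":
            return reply["text"]
        # Run the requested tool and hand its result back to the model.
        result = TOOLS[reply["name"]](**reply["args"])
        messages.append({"role": "tool", "content": json.dumps(result)})
    return "Stopped: step limit reached."

answer = run_agent("What is 2 + 3?")
```

The step limit is a small safety measure so that a model that keeps requesting tools cannot loop forever.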

Deployment Considerations: Efficiency and Safety

Model Compression and Distillation

To deploy LLMs at scale, techniques like model pruning, quantization, and knowledge distillation reduce model size and latency while preserving most of the model's accuracy, enabling real-time applications on diverse hardware.
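
Of these techniques, quantization is the simplest to show in a few lines. The sketch below applies symmetric per-tensor int8 quantization to a short list of weights; this is one basic scheme picked for illustration, and production systems typically use finer-grained or calibrated variants.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] so that w is approximately scale * q."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Recover approximate float weights from the stored integers."""
    return [v * scale for v in q]

weights = [0.5, -1.0, 0.25, 0.0]          # made-up example values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight now fits in one byte instead of four (for 32-bit floats), at the cost of a rounding error of at most about half the scale per weight.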

Content Moderation and Bias Mitigation

Robust pipelines incorporate content filters, bias detection algorithms, and adversarial testing to minimize harmful or misleading outputs and support responsible AI deployment.

Explainability and User Transparency

Efforts to improve model interpretability help users understand AI decisions, fostering trust and enabling better human-AI collaboration.

The Future Trajectory of LLMs

- Greater Efficiency: Innovations in architecture and hardware will drive more sustainable training and inference.

- Enhanced Reasoning: Models will better handle multi-step logic, causal inference, and domain-specific knowledge.

- Richer Interaction: Integration of voice, vision, and real-time data will create immersive, multi-modal AI experiences.

- Personalization: LLMs will adapt dynamically to individual user preferences and contexts, delivering tailored assistance.

Conclusion

Modern Large Language Models are the product of intricate architectures, massive data-driven training, and sophisticated inference mechanisms. Their ability to generate contextually rich, human-like text arises from deep attention mechanisms, advanced training paradigms like RLHF, and efficient deployment strategies. As these models continue to evolve, they will underpin increasingly intelligent, interactive, and responsible AI systems that transform how we communicate, create, and solve problems.